Beyond the Pilot Phase: Designing AI-Ready Institutions

There are people who talk about AI in higher education, and there are people who have lived inside higher education long enough to know why the talking rarely becomes doing. Robert J. Clougherty, Ph.D., is the latter. He has been a tenured full professor, a graduate program chair, a provost, a CIO, a vice president, and a co-founder, most notably of Glasgow Caledonian New York College, where he secured degree-granting authority and Middle States accreditation from scratch. He has sat in faculty senate meetings. He has been the person in the room when the skeptics show up.

That depth of experience is exactly what he brings to Edge, and it is what makes his new role as AI Lead such a natural fit for the moment Edge's member institutions now face.

"The through line really has always been meaning — how people make meaning, how institutions create the conditions for it, and what happens when technology either supports or disrupts that process."

– Robert J. Clougherty, Ph.D., AI Lead, Edge

His Ph.D. focused on semiotics, the study of how meaning is made, and it is a lens he has never set down. It is also why he named his personal consulting practice Recursive Meaning AI. In his view, the arrival of large language models is not a break from the academic tradition but a deep extension of it. As Bob puts it, "AI isn't just a subject. It's also an object of study, and it offers great opportunities to faculty and students."

Why Edge, Why Now

Edge has long served New Jersey's higher education community as a trusted infrastructure partner — networking, cloud services, cybersecurity, procurement. But Clougherty sees the AI moment as categorically different from those earlier pivots.

"It touches everything at once — academics, operations, governance, workforce, student experience. Campuses don't just need a new tool or a new vendor. They need a trusted partner who understands higher education's mission, culture, and constraints."

– Robert J. Clougherty, Ph.D.

Being a trusted partner is part of Edge's DNA. Most institutions know they need an AI strategy but are caught between the pressure to act and the uncertainty of how to act. Edge is positioned to help them close that gap, not by telling campuses what to buy, but by helping them build the capacity to decide wisely.

The Coordination Problem and What It Actually Costs

EdgeAI frames its mission around a pointed diagnosis: most campuses don't have an AI problem so much as a coordination problem.

"Most campuses really don't lack for AI tools," explains Bob. "They're drowning in them. What they lack is the connective tissue — the shared decision rights, the data readiness, the institutional trust to move forward together."

In a recent piece published in TechTarget, Clougherty traces this problem to what he calls the "software age" — the 1990s and early 2000s wave of technology spending in which departments bought tools to solve local problems, while central IT was left maintaining an uncoordinated ecosystem rather than designing an enterprise. The result, he argues, is a hidden tax that campuses are still paying today: in meetings, workarounds, data silos, and processes that were never designed to talk to each other.

AI, he warns, risks repeating exactly that pattern — only faster, and with higher stakes. "If the AI age becomes the software age, but with chat, we will simply add another stack of bricks and call it transformation."

Clougherty has a name for what that pattern looks like in practice: the "AI mushroom" phenomenon, a patchwork of disconnected pilots that spring up across campus with no coherent logic. In a piece for Higher Education Digest, he explored how CIOs and provosts are navigating exactly this dynamic: tools proliferating faster than governance can keep up, leaving institutions with isolated experiments but no enterprise strategy.

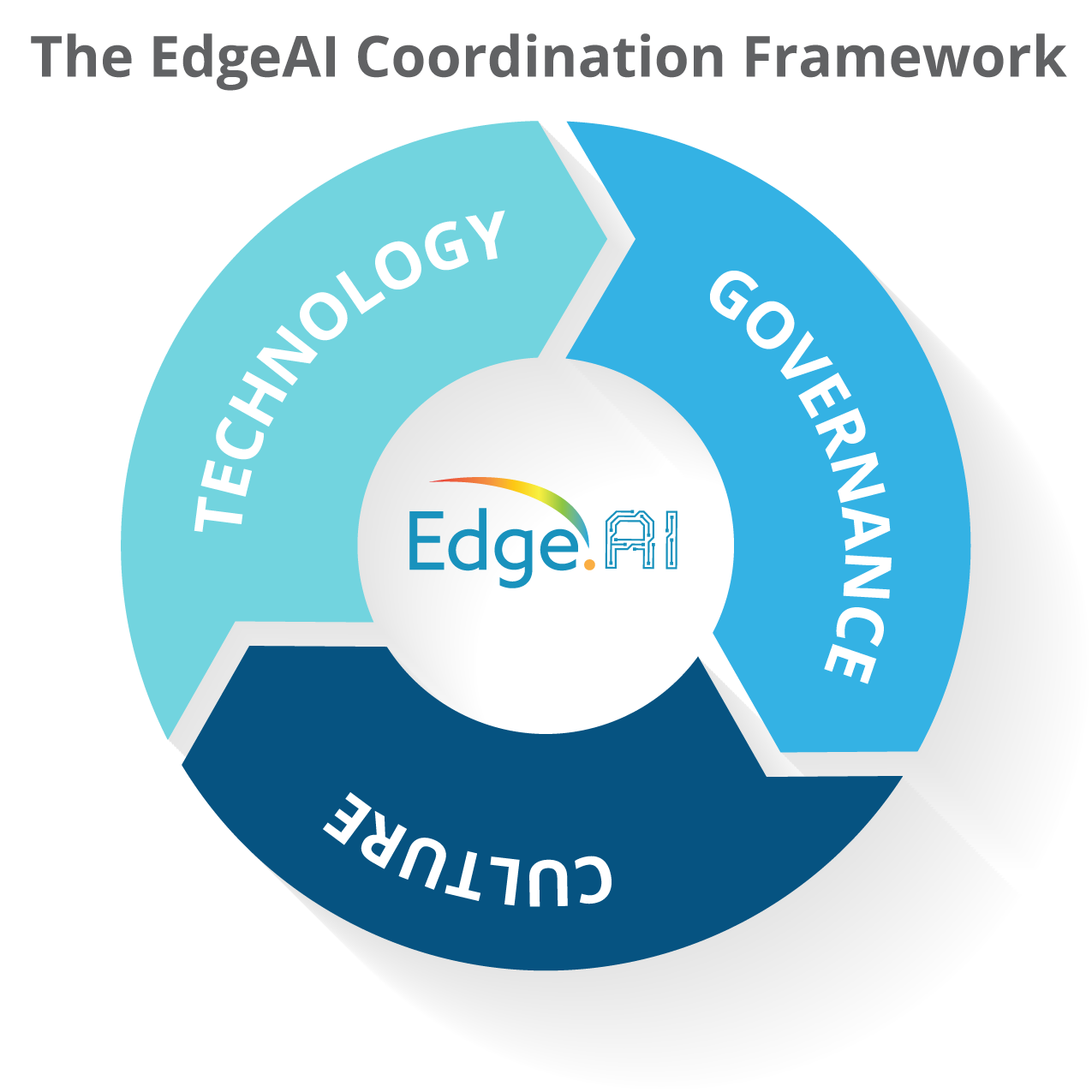

The antidote, in his framework, is a triangle of technology, governance, and culture.

"Technology without governance gives you the mushrooms," he explains. "Governance without culture becomes compliance theater. Culture without technology becomes talk and fatigue. You have to move all three together." Getting coordination right, in practice, means starting with mission rather than tools; establishing clear decision rights early (who approves an AI use case, who owns the data, which risk tier a use case falls into); and investing in the human side through listening sessions, role-based training, and honest conversations about what AI can and cannot do.

From Pilot Graveyard to Enterprise Impact

If the coordination problem explains why AI stalls at the campus level, the pilot graveyard problem explains why it stalls at the project level. Clougherty sees this pattern constantly: promising experiments that go nowhere because there is no defined end goal, no clear success criteria, no evaluation rhythm, and no pathway to scale.

"You end up in a situation where nobody can explain what 'working' actually means," he says. "An AI strategy that reads like a procurement plan is a recipe for disaster."

He is equally clear about a second trap: the belief that AI will fix existing problems. It won't. AI amplifies what it encounters. If an institution is data-siloed, AI will exaggerate the silos. If the data is poor quality, the outputs will reflect that — with greater confidence. If processes are misaligned, AI will institutionalize and automate the misalignment. Getting the foundation right first is not optional.

Breaking through to enterprise-level impact, Clougherty says, requires three things: a roadmap grounded in institutional mission rather than vendor demos; an evaluation framework that tells you when a pilot is ready to scale — and when it should be retired; and a governance structure that gives people the confidence to make decisions. That, he says, is precisely what EdgeAI is designed to build.

The AIR Assessment: A Blueprint, Not a Test

EdgeAI's Artificial Intelligence Readiness (AIR) assessment is the entry point for most member institutions. Clougherty is careful about how he frames it.

"The readiness assessment isn't a test you can fail. It's a structured conversation that produces a shared truth about where your institution actually stands — what's real, what's risky, what's next."

– Robert J. Clougherty, Ph.D., AI Lead, Edge

Built around the same technology-governance-culture triangle, the process produces a prioritized path (what to start, what to stop, what to continue), a 12-month execution plan, and a longer-term capability horizon that is sequenced and realistic. Most importantly, it produces alignment: a shared language and shared framework that lets the provost, the CIO, the CFO, and the faculty senate have a productive conversation about AI instead of talking past each other.

To a president or provost who is unsure whether their institution is ready to take that step, Clougherty's answer is direct: "The question isn't whether your institution is ready. The question is whether you can afford not to find out."

Honoring Academic Culture — Not Steamrolling It

Higher education has deeply held values around academic freedom, shared governance, and equity. AI can reinforce or undermine all of them. Clougherty's thinking here is shaped as much by lived experience as by theory.

He recalls moving a campus online in the early days of digital learning, when faculty were so suspicious of the effort that a colleague once confided to him — not knowing he was part of it — that the provost was meeting in a basement to plan how to "replace faculty with computers." The anecdote still makes him laugh. But the lesson it carries is serious: institutions that succeeded in online learning were the ones that gave faculty a meaningful role in shaping the pedagogy. The same principle applies to AI.

EdgeAI's governing philosophy is captured in a phrase Clougherty uses often: disrupt your process, don't disrupt your culture. Governance frameworks imposed from the top without meaningful faculty and staff engagement produce resistance, resentment, and compliance theater. At the same time, institutions that adopt AI primarily as an efficiency mechanism, without addressing the deeper questions of learning, meaning, and institutional identity, risk hollowing themselves out.

"They'll become more efficient at producing outputs whose meaning is increasingly unclear," says Bob. "That's not a technology problem. That's an organizational identity problem."

Building for the Next Wave, Not Just the Current One

Clougherty is candid about the pace of change. No institution can keep up with AI release cycles in which new models seem to arrive every 48 hours, and trying to is a losing strategy. The goal is adaptive capacity.

He points to a practical example: a student-facing AI system he built for a client using retrieval-augmented generation (RAG), which draws its answers from the institution's own documents at query time. Because of that design, when the student handbook is updated, the institution simply drops the new file into a folder and the system updates accordingly. No vendor contract renegotiation. No IT overhaul. Just a system designed to adapt. "Survival doesn't go to the strongest or the smartest," he explains. "It goes to the most adaptive to change."

Culture and governance, the other two sides of the triangle, are what determine whether an institution can absorb the next wave of AI, and the one after that. They cannot be afterthoughts bolted on behind the technology. They have to be designed deliberately, from the start, as the infrastructure that makes everything else sustainable.

There Is No Middle

Three years from now, what does success look like? Clougherty has thought carefully about this, and his answer reflects just how differently he thinks about planning in the AI era. "Three AI years is not three calendar years in any meaningful planning sense," notes Bob. "The target is moving faster than any fixed plan."

The marker he points to is not a list of tools deployed or pilots completed. It is institutional capacity: member institutions that can evaluate, absorb, and critically deploy AI they haven't even seen yet. Campuses where the discourse has shifted from "Should we use AI?" to "How do we best use AI to fulfill our mission?" Institutions making AI decisions from a coherent identity rather than scrambling to keep up.

That vision maps directly to the thesis of his most recent long-form essay, "There Is No Middle," a piece aimed squarely at presidents and provosts wrestling with whether to commit. The argument, plainly stated: you cannot invest seriously in AI while holding tight to legacy systems built for a different era. The strategic logics are incompatible. Half-measures exhaust the people asked to maintain the contradiction without advancing either goal.

For Edge member institutions, Clougherty's arrival signals something important: this is not a moment for another pilot. It is a moment for the kind of deliberate, mission-grounded institutional design that only happens when leadership decides to commit. Edge is here to help make that commitment actionable.